Measuring Model Biases in the Absence of Ground Truth

Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society, 2021

Abstract

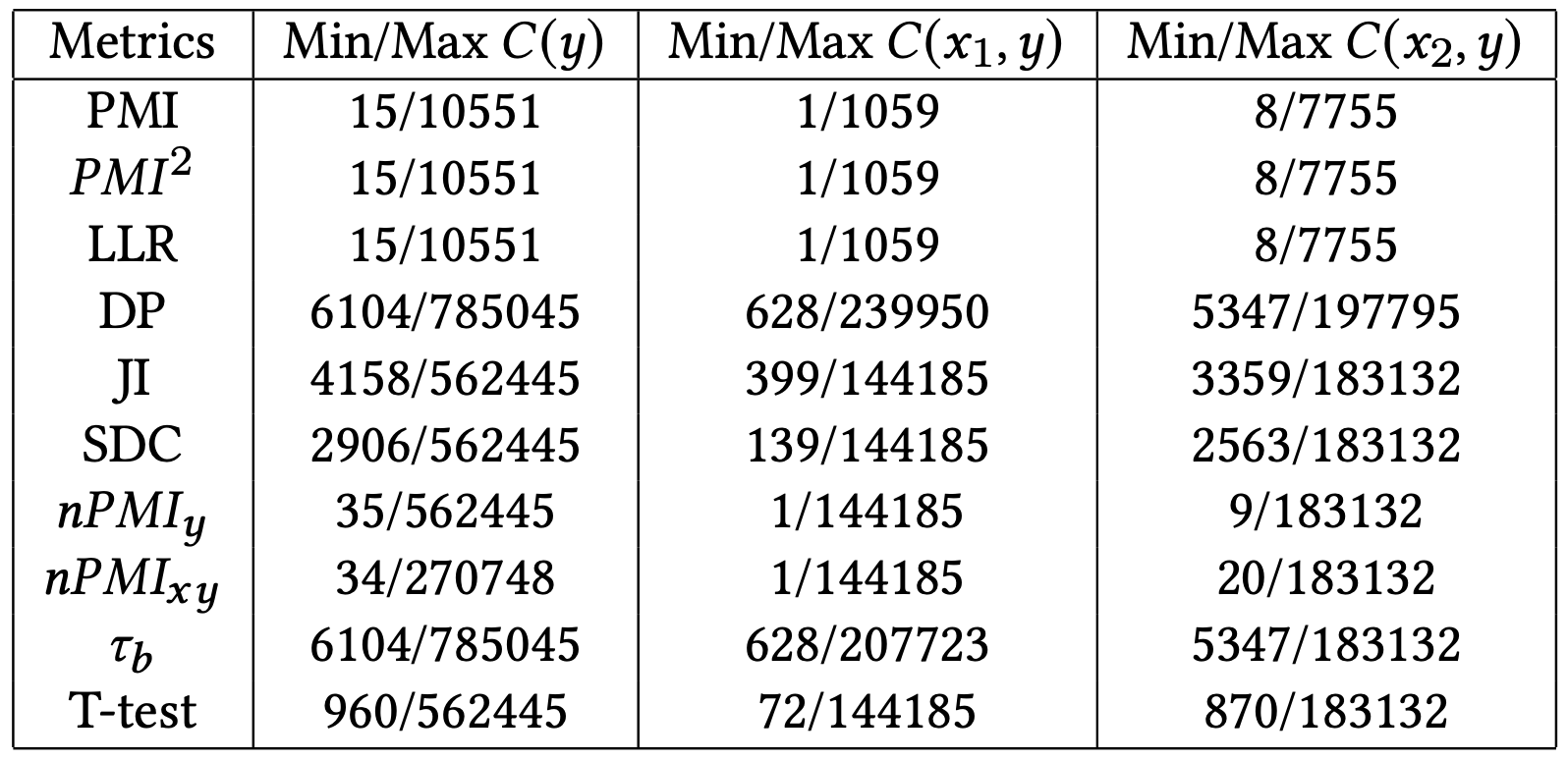

Recent advances in computer vision have led to the development of image classification models that can predict tens of thousands of object classes. Training these models can require millions of examples, leading to a demand for potentially billions of annotations. In practice, however, images are typically sparsely annotated, which can lead to problematic biases in the distribution of the ground truth labels that are collected. This potential for annotation bias may then limit the utility of ground truth-dependent fairness metrics (e.g., Equalized Odds). To address this problem, in this work we introduce a new framing for the measurement of fairness and bias that does not rely on ground truth labels. Instead, we treat the model predictions for a given image as a set of labels, analogous to the "bag of words" approach used in Natural Language Processing (NLP). This allows us to explore different association metrics between prediction sets in order to detect patterns of bias. We apply this approach to examine the relationship between identity labels and all other labels in the dataset, using labels associated with male and female as a concrete example. We demonstrate how the statistical properties (especially normalization) of the different association metrics can lead to different sets of labels being detected as having "gender bias". We then show that pointwise mutual information normalized by joint probability (nPMI) is able to detect many labels with significant gender bias despite differences in the labels' marginal frequencies. Finally, we announce an open-sourced nPMI visualization tool using TensorBoard.
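The association analysis described in the abstract can be illustrated with a minimal sketch. This is not the authors' released code or the TensorBoard tool; it assumes the standard form of PMI normalized by joint probability, nPMI(x, y) = log[p(x, y) / (p(x) p(y))] / -log p(x, y), estimates each probability as the fraction of images whose prediction set contains the label(s), and scores every other label by the gap nPMI(identity_a, label) - nPMI(identity_b, label). The function names (`label_counts`, `npmi`, `npmi_gaps`) and the toy prediction sets are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations


def label_counts(prediction_sets):
    """Count how many images contain each label and each unordered label pair."""
    single = Counter()
    pair = Counter()
    for labels in prediction_sets:
        unique = sorted(set(labels))  # treat each image's predictions as a bag of labels
        single.update(unique)
        pair.update(combinations(unique, 2))  # pairs come out in sorted order
    return single, pair


def npmi(x, y, single, pair, n_images):
    """PMI normalized by joint probability; ranges from -1 to 1."""
    joint = pair[tuple(sorted((x, y)))] / n_images
    if joint == 0.0:
        return -1.0  # labels never co-occur: use the limiting value
    if joint == 1.0:
        return 1.0  # labels co-occur in every image: avoid dividing by -log(1) = 0
    px = single[x] / n_images
    py = single[y] / n_images
    pmi = math.log(joint / (px * py))
    return pmi / -math.log(joint)


def npmi_gaps(prediction_sets, identity_a, identity_b):
    """Score each non-identity label by nPMI(identity_a, label) - nPMI(identity_b, label)."""
    single, pair = label_counts(prediction_sets)
    n = len(prediction_sets)
    gaps = {}
    for label in single:
        if label in (identity_a, identity_b):
            continue
        gaps[label] = (npmi(identity_a, label, single, pair, n)
                       - npmi(identity_b, label, single, pair, n))
    # Largest positive gap (most associated with identity_a) first.
    return dict(sorted(gaps.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical model prediction sets, one per image (no ground truth involved).
images = [
    {"man", "beard", "suit"},
    {"man", "suit", "car"},
    {"woman", "dress", "car"},
    {"woman", "dress"},
    {"man", "car"},
    {"woman", "suit"},
]

for label, gap in npmi_gaps(images, "man", "woman").items():
    print(f"{label}: {gap:+.3f}")
```

In this toy data, labels with a large positive gap co-occur more strongly with the first identity label than with the second, and large negative gaps indicate the reverse. A real analysis would also need to decide how to treat labels that never co-occur with an identity label (the sketch uses the limiting value -1) and how many images are needed before a gap can be trusted.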

BibTeX

@inproceedings{aka2021measuring,
  title={Measuring Model Biases in the Absence of Ground Truth},
  author={Aka, Osman and Burke, Ken and B{\"a}uerle, Alex and Greer, Christina and Mitchell, Margaret},
  booktitle={Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society},
  year={2021},
  doi={10.1145/3461702}
}