Leveraging Self-Supervised Vision Transformers for Neural Transfer Function Design

IEEE Transactions on Visualization and Computer Graphics 2024

Abstract

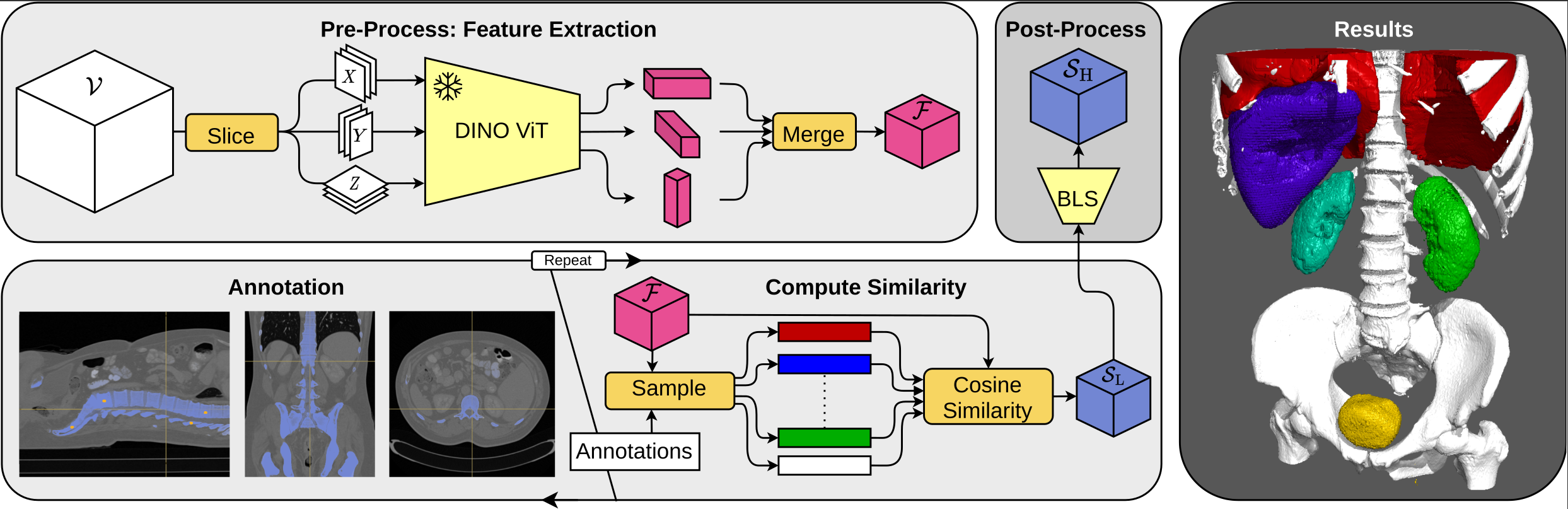

In volume rendering, transfer functions are used to classify structures of interest and to assign optical properties such as color and opacity. They are commonly defined as 1D or 2D functions that map simple features to these optical properties. As the process of designing a transfer function is typically tedious and unintuitive, several approaches have been proposed for their interactive specification. In this paper, we present a novel method to define transfer functions for volume rendering by leveraging the feature extraction capabilities of self-supervised pre-trained vision transformers. To design a transfer function, users simply select the structures of interest in a slice viewer, and our method automatically selects similar structures based on the high-level features extracted by the neural network. Contrary to previous learning-based transfer function approaches, our method does not require training of models and allows for quick inference, enabling an interactive exploration of the volume data. Our approach reduces the number of necessary annotations by interactively informing the user about the current classification, so they can focus on the structures of interest that are not yet classified correctly. In practice, this allows users to design transfer functions within seconds instead of minutes. We compare our method to existing learning-based approaches in terms of annotation and compute time, as well as with respect to segmentation accuracy. Our accompanying video showcases the interactivity and effectiveness of our method.
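The selection step described in the abstract (annotate a few locations, then pick up similar structures from high-level features) can be illustrated with a minimal sketch. This is not the authors' implementation: the abstract does not state the similarity measure, so cosine similarity is an assumption here, and the feature volume is synthetic rather than the output of a pre-trained vision transformer. The function names, the `(D, H, W, C)` layout, and the `threshold` parameter are all hypothetical.

```python
import numpy as np


def similarity_map(features, annotated_idx):
    """Max cosine similarity of every voxel to any annotated voxel.

    features:      (D, H, W, C) array of per-voxel feature vectors
                   (assumed layout, stand-in for network features).
    annotated_idx: list of (z, y, x) voxel positions chosen by the user.
    Returns a (D, H, W) array with values in [-1, 1].
    """
    flat = features.reshape(-1, features.shape[-1])
    norms = np.linalg.norm(flat, axis=1, keepdims=True)
    flat = flat / np.maximum(norms, 1e-8)          # unit-length features
    normed = flat.reshape(features.shape)
    queries = np.stack([normed[z, y, x] for z, y, x in annotated_idx])
    sims = flat @ queries.T                        # (num_voxels, num_queries)
    return sims.max(axis=1).reshape(features.shape[:-1])


def opacity_from_similarity(sim, threshold=0.8):
    """Map similarity to opacity: 0 below the threshold, ramping to 1."""
    return np.clip((sim - threshold) / (1.0 - threshold), 0.0, 1.0)


# Synthetic volume with two "structures" that differ in feature space.
rng = np.random.default_rng(0)
features = rng.normal(scale=0.02, size=(4, 8, 8, 16))
features[:, :, :4, 0] += 1.0   # structure A occupies x < 4
features[:, :, 4:, 1] += 1.0   # structure B occupies x >= 4

# One annotation inside structure A.
sim = similarity_map(features, [(0, 0, 0)])
opacity = opacity_from_similarity(sim)
```

With a single annotation in structure A, the similarity map is high across all of A and low across B, so the derived opacity makes A visible and B transparent. Adding annotations only where the current result is wrong mirrors the interactive refinement the abstract describes.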

Bibtex

@article{engel2023leveraging,
  title={Leveraging Self-Supervised Vision Transformers for Neural Transfer Function Design},
  author={Engel, Dominik and Sick, Leon and Ropinski, Timo},
  year={2024},
  journal={IEEE Transactions on Visualization and Computer Graphics},
  doi={10.1109/TVCG.2024.3401755}
}